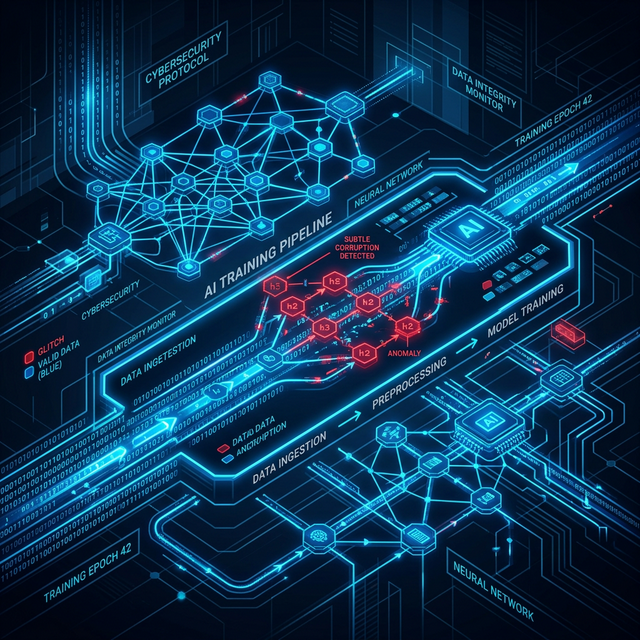

Artificial Intelligence has fundamentally changed how we process logs and identify threats at scale. We now rely heavily on machine learning models to establish baselines of "normal" behaviour and flag anomalies within those massive datasets. Yet, this reliance poses a critical, often ignored question: what exactly happens when the baseline itself is compromised?

Most security leaders view their telemetry ingestion pipelines as trusted internal structures. We invest millions into securing the perimeter, configuring strict robust Identity and Access Management, and tuning our SIEM alerts, but we rarely pause to audit the integrity of the raw data flowing into our most advanced detection algorithms.

The Boiling Frog Scenario

Data poisoning is an insidious risk where adversaries subtly manipulate the training data fed into our security models. Rather than launching a loud, smash-and-grab breach to exfiltrate a database, they slowly, methodically inject malicious activity into the training pipeline over an extended period.

The rationale behind this is simple but devastating: if an attacker can trick the AI into classifying their specific attack signatures as entirely benign, they effectively render the defence mechanism totally blind to their presence.

It is the digital equivalent of a boiling frog. The model's perception of "normal" shifts so gradually that the security team never notices the water getting hotter. By the time a ransomware payload executes, or lateral movement triggers a manual human intervention, the AI has already categorised the entire kill chain as standard weekend service behaviour.

I've seen environments where seemingly minor data ingestion errors—such as a misconfigured log forwarder failing to parse essential IP addresses—led to an entirely flawed machine learning baseline. Now, imagine that disruption taking place intentionally, guided by a highly resourced state-backed group. The potential blast radius is catastrophic.

Recognising the Symptoms of a Poisoned Model

How do you know if your model is fundamentally compromised? It is remarkably difficult to spot manually, but certain leading indicators exist: * Gradual Alert Decay: A slow, continuous drop-off in high-fidelity alerts outputted by the model over months, without a corresponding decrease in network traffic. * Classification Flip-Flops: Specific domains or binaries that continuously oscillate between being marked "Suspicious" and "Clean" during retraining phases. * The "Goldilocks" Attack: Threat actor behaviours that sit precisely on the edge of the model's confidence thresholds, intentionally crafted to stay just underneath the trigger point.

The Mitigation Strategy

To defend against data poisoning, we must treat our training data with the exact same level of suspicion as an external network request from an untrusted IP. Pragmatic defence is not about purchasing more advanced algorithms; it is about protecting the integrity of the data those algorithms rely upon.

The key architectural principles include:

- Strict Data Provenance: We cannot treat our training datasets as static or inherently trustworthy. We must track exactly where data originates, who has modified it, and how it is labelled prior to ingestion into the training lake. Cryptographic signing of key log sources is no longer overkill.

- Ensemble Methods: Avoid reliance on a single, monolithic model. Utilising multiple distinct models—trained on disparate datasets and varied features—to cross-check each other ensures that a single poisoned data pipeline cannot compromise the entire defence strategy.

- Immutable Historical Baselines: Always maintain a highly secure, immutable archive of known-good "clean" baselines to periodically benchmark current model behaviour against. If the divergence is sudden or unexplained, the current model must be quarantined.

- Human-in-the-Loop: Maintain expert oversight for any critical baseline adjustments. Algorithms should flag shifts in behaviour, but a human engineer should authorise the new normal.

AI is undoubtedly a powerful engine for defence, and adopting it is necessary scale. But we must remember that it is only as secure, and as honest, as the fuel it runs on.

Recommended Reading

- "Adversarial Machine Learning Threats" - ENISA Report (2025)

- CISA Guidelines for Secure AI System Development - Extensive documentation mapping data integrity controls.

- "The Malicious Use of Artificial Intelligence" - Oxford Environmental Change Institute. An older but foundational text on offensive uses of AI.